The Great AI Hardware Crash

Rachel

The United States tech industry is collectively spending upwards of six hundred and fifty billion dollars on artificial intelligence infrastructure this year, but almost half of the data centers planned for 2026 are already facing delays or outright cancellations. This week, in late April 2026, the digital revolution is slamming into a brick wall of physical reality. Malik, the disconnect between software ambition and hardware supply is reaching a breaking point.

Malik

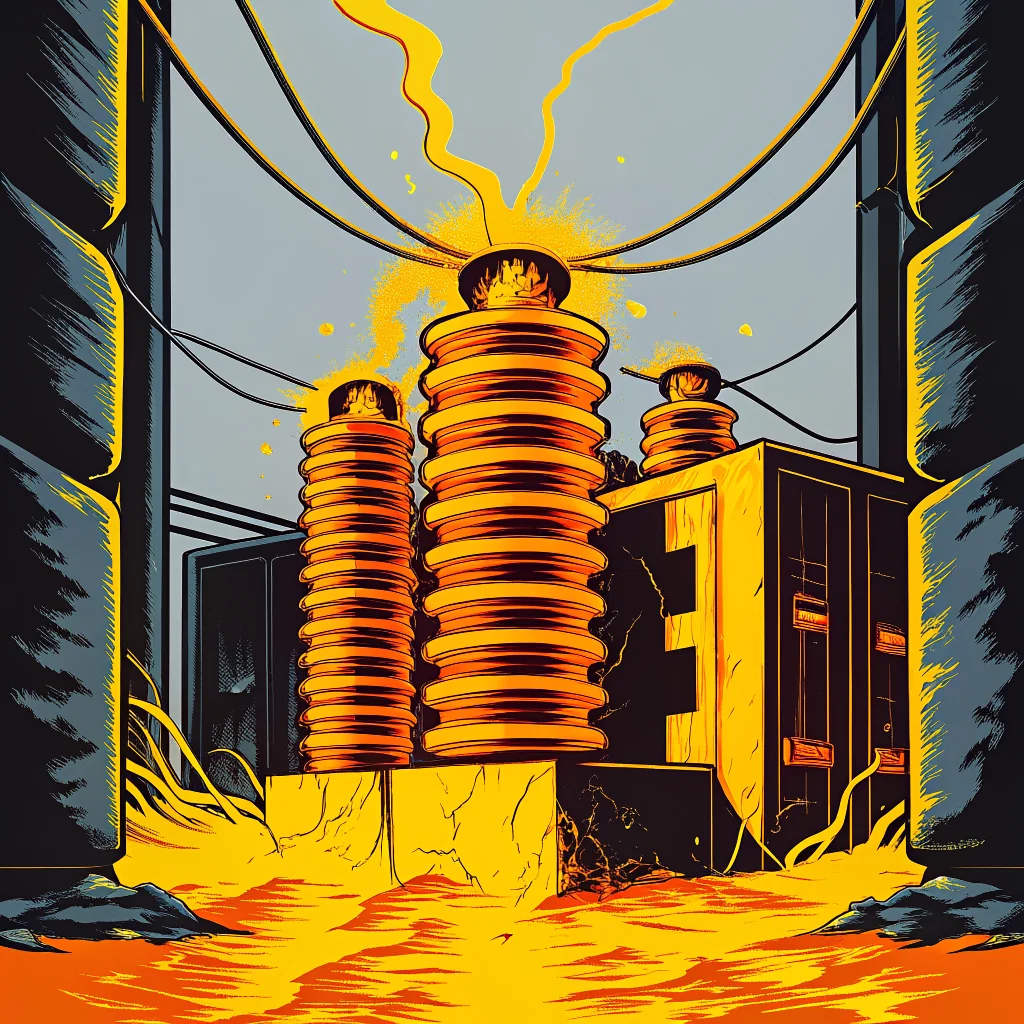

That disconnect is the defining tension of the technology sector right now. Companies like Alphabet, Amazon, Meta, and Microsoft have enormous amounts of capital to deploy, but capital cannot bend the laws of physics or repair fractured supply chains. According to reports circulating this week from Wood Mackenzie and Bloomberg, the primary bottleneck is not a lack of advanced microchips. The core problem is basic electrical infrastructure. We are seeing severe shortages of transformers, switchgear, and the massive batteries required to balance power consumption at these hyperscale facilities.

Rachel

Those are industrial components that power grids have relied on for decades. It seems baffling that the brightest minds in Silicon Valley did not accurately model the supply chain for basic voltage control and power distribution before committing hundreds of billions of dollars.

Malik

They modeled historical data, which is exactly why the forecasts failed to account for this gridlock. Before 2020, the delivery timeline for high-capacity transformers was twenty-four to thirty weeks. Today, power industry surveys show those lead times stretching to upwards of a hundred and twenty-eight weeks, and in some cases to five years. Artificial intelligence data centers operate on an eighteen-month deployment cycle. When the core components require sixty months to procure, the logistical math completely collapses. You have hyperscalers placing equipment orders in April 2026 that will not see delivery until 2030 or 2031.
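To make the scheduling mismatch concrete, here is a small back-of-the-envelope sketch in Python. It only converts figures quoted in the conversation (a 128-week survey lead time, a 60-month worst case, an 18-month deployment cycle, and an April 2026 order date) into comparable units. The helper names `weeks_to_months` and `month_after` are illustrative assumptions, not taken from any report.

```python
from datetime import date

# Average Gregorian month length in days, used to convert weeks to months.
DAYS_PER_MONTH = 365.25 / 12

def weeks_to_months(weeks: float) -> float:
    """Convert a lead time quoted in weeks into average calendar months."""
    return weeks * 7 / DAYS_PER_MONTH

def month_after(start: date, months: int) -> date:
    """Return the first day of the month that is `months` after `start`."""
    total = start.month - 1 + months
    return date(start.year + total // 12, total % 12 + 1, 1)

DEPLOYMENT_CYCLE_MONTHS = 18   # data center build cycle cited above
survey_lead_weeks = 128        # survey lead time cited above
worst_case_lead_months = 60    # "in some cases, five years"

survey_months = weeks_to_months(survey_lead_weeks)
cycles = worst_case_lead_months / DEPLOYMENT_CYCLE_MONTHS
delivery = month_after(date(2026, 4, 1), worst_case_lead_months)

print(f"{survey_lead_weeks} weeks is about {survey_months:.1f} months")
print(f"A 60-month lead time spans {cycles:.1f} deployment cycles")
print(f"An April 2026 order arrives around {delivery:%B %Y}")
```

Even the survey figure of roughly 29 months is longer than a full 18-month build cycle, and the five-year worst case spans more than three of them, which is why an order placed this month lands in 2031.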

Rachel

That delay creates a massive vulnerability, not just for business growth but for national strategy. I read recent data from the US International Trade Commission showing that imports of major electrical equipment have surged, primarily from China. That introduces a glaring strategic risk. We are attempting to build an artificial intelligence moat while relying on our primary geopolitical rival for the foundational hardware.

Malik

The domestic resistance is mounting just as fast as the geopolitical friction. State governments are pushing back against the sheer energy consumption of these facilities. Just this month, the Democrat-controlled legislature in Maine passed a bill to halt any new data center projects requiring at least twenty megawatts of power until late 2027. Maine has some of the highest residential electricity prices in the country, and lawmakers are terrified that hyperscalers will inflate the cost of living even further by draining the grid.

Rachel

Vermont and Georgia are reportedly mulling similar legislation. It feels like a localized rebellion against big tech. State governments are realizing that hosting a massive server farm drains local water supplies and power grids without necessarily creating long-term local jobs or civic benefits.

Malik

A data center is effectively a very quiet, extremely power-hungry neighbor. If the Maine governor signs that bill, it establishes a formal precedent. It fragments the United States technology landscape into zones that are pro-AI development and zones that are heavily restricted. We must consider the sheer volume of capital trapped in this bottleneck. Alphabet, Amazon, Meta, and Microsoft have planned around six hundred and fifty to seven hundred billion dollars in total infrastructure spending for this year alone. A vast portion of that is earmarked specifically for data centers and Nvidia graphics processing units. Alphabet CEO Sundar Pichai told investors that the company expects to remain supply-constrained throughout the entire year despite record capital allocation.

Rachel

It creates a massive logjam for developers. If the hyperscalers cannot build the physical infrastructure to house the servers, the developers cannot rent the compute power. Industry trackers are noting that on-demand GPU rental capacity is severely constrained across all major cloud providers, with lead times for server access hitting fifty-two weeks. Startups are raising millions of dollars in venture capital, only to find they cannot actually buy the compute time necessary to train their models.

Malik

That lack of access is what drives the secondary trend we are seeing globally. When developers cannot access massive compute arrays legally or affordably, they look for technological shortcuts.

Rachel

Which brings us perfectly to the software side of this national security puzzle. While the physical hardware is bottlenecked by transformers, the software models are facing an entirely different kind of leakage. The White House Office of Science and Technology Policy released a memo this month detailing a major shift in the technology war. They are officially targeting a technique called artificial intelligence distillation.

Malik

Distillation is a fascinating workaround to the very hardware constraints we just discussed. For years, the United States maintained a massive advantage by controlling the export of high-end graphics processing units. The strategy was to starve foreign adversaries of the compute power needed to build massive foundation models. Distillation largely bypasses that hardware requirement.

Rachel

Break down the mechanics of distillation for someone who understands software applications but not the deep intricacies of machine learning architectures.

Malik

Think of a frontier model like OpenAI’s GPT-5.5 or Anthropic’s Claude as a brilliant university professor. Training that professor took billions of dollars, vast server farms, and years of iteration. Distillation happens when you use that professor to tutor a smaller, highly efficient student model. Instead of paying for the expensive training process from scratch, a foreign actor can feed highly complex prompts into the US-based frontier model, harvest the high-quality outputs, and use that synthetic data to train their own localized system.
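For readers who want to see the professor-and-student idea in code, here is a deliberately tiny Python sketch. It is a toy analogy under stated assumptions, not how frontier language models are distilled in practice, which typically means fine-tuning a smaller language model on text generated by the larger one. The hypothetical `teacher` function stands in for the expensive model behind an API, `harvest` plays the role of sending prompts and recording the outputs, and `train_student` fits a much smaller logistic-regression model to those recorded outputs. All names, features, and hyperparameters are invented for illustration.

```python
import math
import random

def teacher(x1: float, x2: float) -> float:
    """Hypothetical stand-in for an expensive frontier model: returns P(label=1)."""
    score = 3 * x1 - 2 * x2 + 0.3 * x1 * x2 + 0.5  # includes a nonlinear term
    return 1 / (1 + math.exp(-score))

def sigmoid(z: float) -> float:
    return 1 / (1 + math.exp(-z))

def harvest(n: int, rng: random.Random) -> list[tuple[float, float, float]]:
    """Query the teacher on synthetic inputs and record its soft outputs."""
    data = []
    for _ in range(n):
        x1, x2 = rng.uniform(-2, 2), rng.uniform(-2, 2)
        data.append((x1, x2, teacher(x1, x2)))
    return data

def train_student(data, epochs: int = 200, lr: float = 0.5):
    """Fit a small linear (logistic) student to the teacher's soft labels
    with full-batch gradient descent on cross-entropy."""
    w1 = w2 = b = 0.0
    n = len(data)
    for _ in range(epochs):
        g1 = g2 = gb = 0.0
        for x1, x2, p_teacher in data:
            # Gradient of soft-label cross-entropy with respect to the logit.
            err = sigmoid(w1 * x1 + w2 * x2 + b) - p_teacher
            g1 += err * x1
            g2 += err * x2
            gb += err
        w1 -= lr * g1 / n
        w2 -= lr * g2 / n
        b -= lr * gb / n
    return w1, w2, b

def agreement(student, rng: random.Random, n: int = 1000) -> float:
    """Fraction of fresh inputs where student and teacher pick the same label."""
    w1, w2, b = student
    hits = 0
    for _ in range(n):
        x1, x2 = rng.uniform(-2, 2), rng.uniform(-2, 2)
        hits += (teacher(x1, x2) > 0.5) == (sigmoid(w1 * x1 + w2 * x2 + b) > 0.5)
    return hits / n

student = train_student(harvest(500, random.Random(0)))
print(f"Student agrees with teacher on {agreement(student, random.Random(1)):.1%} of new inputs")
```

The design point is that the student never sees the teacher's internals or its original training data, only its answers, which is why the policy debate centers on who is allowed to query these models at scale.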

Rachel

They are essentially pirating the reasoning capabilities of the American models without paying the massive compute tax required for initial training.

Malik

They strip the value without the investment, allowing them to run that smaller student model on much cheaper, widely available hardware. It reduces the compute burden by a factor of a hundred. You no longer need thousands of restricted Nvidia chips to build a capable system. A team with a modest budget and consumer-grade servers can achieve results that cost American companies hundreds of millions of dollars to pioneer. The White House memo noted that foreign actors are deploying tens of thousands of proxies and jailbreaking techniques to systematically harvest this knowledge. The Commerce Department’s Bureau of Industry and Security is now actively trying to figure out how to restrict the use of closed-source model weights.

Rachel

That sounds nearly impossible to enforce effectively. How does the United States government police synthetic data generation across the open internet when bad actors use virtual private networks and proxy servers to disguise their true geographic origin?

Malik

It is an enforcement nightmare. Retired General Paul Nakasone acknowledged the profound difficulty recently, noting that while the government is evaluating restrictions, no clear timeline or technical mechanism has been set. The government might force American artificial intelligence companies to implement much stricter Know-Your-Customer protocols, essentially vetting the identity of anyone who accesses their application programming interfaces at scale.

Rachel

That creates intense friction for developers. If Microsoft or Google must aggressively monitor API usage to prevent distillation, it inherently slows down the entire domestic innovation ecosystem. Speaking of friction and industry leadership, the human drama behind these models is peaking this week. Jury selection is starting in San Francisco for Elon Musk’s lawsuit against Sam Altman and OpenAI.

Malik

That courtroom showdown is the culmination of a philosophical war over the future of artificial intelligence. Musk, who co-founded OpenAI in 2015 and invested nearly forty million dollars, is accusing Altman of abandoning the organization’s foundational non-profit mission to benefit humanity. Musk argues that OpenAI, which is now valued at roughly eight hundred and fifty-two billion dollars and backed heavily by Microsoft, simply used the non-profit shield to aggregate top-tier talent before pivoting to pure capitalism.

Rachel

The irony is palpable. Musk now runs a competing artificial intelligence company, xAI, pushing his own chatbot named Grok. He is litigating the moral purity of OpenAI while actively trying to crush it in the commercial market. The judge is aiming for a jury decision by late May, but the evidence gathered in discovery alone will expose the internal communications of the most secretive company in Silicon Valley.

Malik

The discovery record is what terrifies corporate boards across the sector. We are going to see emails from 2015 and 2016 detailing how the founders defined benefiting humanity versus achieving market dominance. The market is watching closely because OpenAI is reportedly preparing to go public on the stock market. If a jury determines they breached their foundational promises, the structural fallout could be immense. It forces us to ask whether artificial intelligence should belong to the public domain or to the shareholders of a few massive tech conglomerates.

Rachel

That question of corporate consolidation leads directly into the biggest leadership shakeup of the decade. TechRadar and multiple financial outlets confirmed this week that Tim Cook is stepping down as Apple’s CEO in September. John Ternus, the current head of Hardware Engineering, is officially taking over.

Malik

The timing caught the entire industry off guard. Apple just celebrated its fiftieth birthday, and Cook had previously signaled he was staying for the foreseeable future. Ternus taking the helm is a clear signal that Apple views its hardware ecosystem as its enduring competitive moat. As companies like Google and Microsoft pour all their resources into cloud-based software, Apple is doubling down on the physical devices we interact with daily.

Rachel

But Apple still has to integrate cutting-edge intelligence into that hardware. Cook has managed one of the greatest runs of market capitalization growth in corporate history, relying heavily on steady supply chain mastery and immense stock buybacks. According to Morningstar data released this week, Apple has reduced its share count by over thirteen percent in the past five years. Apple returns cash directly to shareholders while its rivals burn cash on data centers. Alphabet, Microsoft, Amazon, and Meta are all sacrificing short-term profit margins for long-term infrastructure plays.

Malik

That financial strategy works until there is a fundamental paradigm shift. Cook’s legacy is operational perfection. He built the most efficient consumer electronics supply chain in human history. Ternus will have to navigate a world where hardware is increasingly judged by the intelligence of the software running on it. Apple has the capital to compete, but they are playing catch-up in a space moving at a blistering speed. The challenge for Ternus is whether he can pivot a company deeply entrenched in a hardware-first, highly secretive culture toward an ecosystem that requires massive data ingestion and rapid software iteration.

Rachel

A separate hardware development slipped under the radar amid the broader data center crisis, and it speaks directly to our global semiconductor strategy. A Japanese materials manufacturer named Resonac just inaugurated a new research and development center in Silicon Valley. This is the first facility in the United States dedicated entirely to advanced semiconductor-packaging technologies, operating under a consortium called US-JOINT.

Malik

That facility is crucial to the broader picture. When we talk about microchips, we often focus on the nanometer size of the transistors, but packaging is where the real performance gains are currently happening. Advanced packaging allows engineers to stack different chips, or chiplets, closely together to act as a single unit. This is vital for generative AI and autonomous driving applications. Historically, testing these new packaging concepts took six months of back-and-forth transit between the United States and manufacturing hubs in Asia. The US-JOINT consortium aims to reduce that proof-of-concept period to just one month.

Rachel

It represents a tightening of the technological alliance between the United States and Japan. By bringing companies like 3M into the fold to provide core materials such as advanced chemical mechanical planarization pads, the consortium is attempting to onshore a highly specialized segment of the semiconductor supply chain. It stands in sharp contrast to the severe vulnerability we face with imported power transformers.

Malik

The entire technological roadmap—from generative AI distillation to advanced chiplet packaging—is trapped in this constant tug-of-war between software innovation and physical constraints.

Rachel

It forces a severe reckoning for the industry. Silicon Valley operates on the principle of exponential growth, but the physical world operates on linear constraints. The events of April 2026 illustrate that tension perfectly. The United States is trying to restrict the export of software model weights because the hardware export bans are being bypassed. Local governments are banning data centers to protect their constituents’ electricity bills. Meanwhile, the technology itself continues to advance, demanding more power, more data, and more infrastructure.

Malik

We are witnessing the end of the frictionless internet era. For thirty years, digital innovation was largely unconstrained by the physical environment. Code scaled infinitely. Artificial intelligence does not scale infinitely. It requires massive physical resources, delicate geopolitical maneuvering, and complex societal compromise. The decisions made in courtrooms regarding OpenAI, in legislative chambers concerning the power grid, and in corporate boardrooms managing CEO transitions this month will dictate the trajectory of this industry for the next decade.

Rachel

The digital revolution has finally collided with the material world. Please send this episode, The Great AI Hardware Crash, to a colleague or a friend who tracks the tech sector.
